What does an analysis of variance tell you?

ANOVA stands for Analysis of Variance. It’s a statistical test that was developed by Ronald Fisher in 1918 and has been in use ever since. Put simply, ANOVA tells you whether there are any statistically significant differences between the means of three or more independent groups.

How does an ANOVA work?

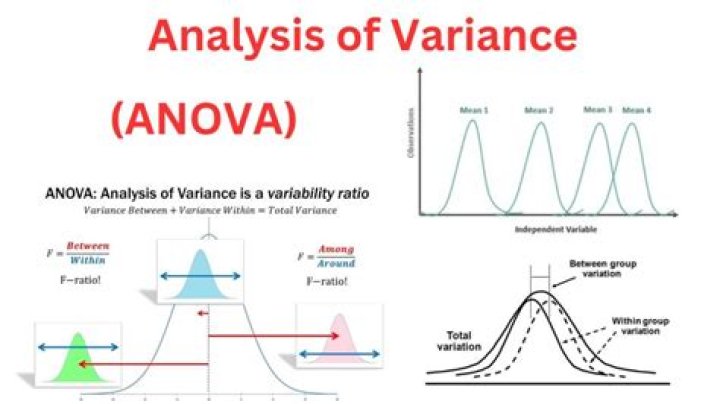

ANOVA is used to compare differences of means among more than 2 groups. It does this by looking at variation in the data and where that variation is found (hence its name). Specifically, ANOVA compares the amount of variation between groups with the amount of variation within groups.

How is analysis of variance calculated?

Steps for Using ANOVA

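- Step 1: Compute the Variance Between. First, compute the sum of squares (SS) between the groups, then divide it by its degrees of freedom (the number of groups minus one).

- Step 2: Compute the Variance Within. Again, first compute the sum of squares within the groups, then divide it by its degrees of freedom (the total number of observations minus the number of groups).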

- Step 3: Compute the Ratio of Variance Between and Variance Within. This is called the F-ratio.
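The three steps above can be sketched in plain Python. This is a minimal illustration for a one-way design, not a replacement for a statistics package; the function name `one_way_anova` and the sample data are made up for the example.

```python
from statistics import mean

def one_way_anova(groups):
    """Return (ss_between, ss_within, f_ratio) for a list of groups (hypothetical helper)."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    group_means = [mean(g) for g in groups]
    k = len(groups)            # number of groups
    n_total = len(all_values)  # total number of observations

    # Step 1: sum of squares between, each group mean weighted by its group size
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))

    # Step 2: sum of squares within, each observation against its own group mean
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)

    # Step 3: turn both into variances (mean squares) and take their ratio
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    return ss_between, ss_within, ms_between / ms_within

# Made-up data: three groups of three observations each
groups = [[4, 5, 6], [6, 7, 8], [8, 9, 10]]
ss_b, ss_w, f_ratio = one_way_anova(groups)
print(ss_b, ss_w, f_ratio)  # 24 6 12.0
```

Here the group means are 5, 7 and 9 around a grand mean of 7, so the between-group variance (12) is twelve times the within-group variance (1).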

Why do we use ANOVA?

You would use ANOVA to help you understand how your different groups respond, with a null hypothesis for the test that the means of the different groups are equal. If there is a statistically significant result, it means that at least one group mean differs from the others. ANOVA on its own does not tell you which groups differ.

Why is it called analysis of variance?

It may seem odd that the technique is called “Analysis of Variance” rather than “Analysis of Means.” The name is appropriate because inferences about means are made by analyzing variance. ANOVA is used to test general rather than specific differences among means.

How do you analyze ANOVA results?

Interpret the key results for One-Way ANOVA

- Step 1: Determine whether the differences between group means are statistically significant.

- Step 2: Examine the group means.

- Step 3: Compare the group means.

- Step 4: Determine how well the model fits your data.

What is the F value in ANOVA?

The F value in one-way ANOVA is a tool to help you answer the question “Are the means of the groups significantly different?” It does so by comparing the variance between the group means with the variance within the groups. The F value in the ANOVA test also determines the P value. The P value is the probability of getting a result at least as extreme as the one that was actually observed, assuming the null hypothesis is true.

What is the difference between a t-test and ANOVA?

The t-test is a method that determines whether the means of two populations are statistically different from each other, whereas ANOVA determines whether the means of three or more populations are statistically different from each other.
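The two tests are closely related: with exactly two groups, the one-way ANOVA F ratio equals the square of the pooled-variance (equal-variance) t statistic. The sketch below checks this on made-up data; `pooled_t` and `f_ratio` are hypothetical helper names, and the equal-variance form of the t-test is an assumption of the example.

```python
from math import sqrt
from statistics import mean, variance

def pooled_t(a, b):
    """Two-sample t statistic with pooled variance (assumes equal variances)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))

def f_ratio(groups):
    """One-way ANOVA F ratio: mean square between / mean square within."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)

# Made-up data for two groups
a = [4, 5, 6, 7]
b = [6, 8, 9, 9]

t = pooled_t(a, b)
f = f_ratio([a, b])
print(t ** 2, f)  # the two values agree up to floating-point rounding
```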

How do you know if ANOVA is significant?

In ANOVA, the null hypothesis is that there is no difference among group means. If at least one group mean differs significantly from the others, the ANOVA will report a statistically significant result, that is, a p-value below the chosen significance level α.

Why does ANOVA look at variance?

When we divide the observations into groups in a way that matters, we get huge variation between the groups and little variation within them. When we divide the observations in a silly way, we get huge variation within the groups and little variation between them. That’s why we look at variances to compare means.
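To make this concrete, the sketch below splits the same nine made-up numbers into three groups in two different ways and reports the sum of squares between and within for each split. The helper name `sums_of_squares` and the data are illustrative assumptions.

```python
from statistics import mean

def sums_of_squares(groups):
    """Return (ss_between, ss_within) for a list of groups (hypothetical helper)."""
    grand_mean = mean(x for g in groups for x in g)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    return ss_between, ss_within

# The same nine values, grouped in a way that matters...
meaningful = [[1, 2, 3], [11, 12, 13], [21, 22, 23]]
# ...and in a way that ignores the structure in the data.
silly = [[1, 12, 23], [2, 13, 21], [3, 11, 22]]

ssb_good, ssw_good = sums_of_squares(meaningful)
ssb_silly, ssw_silly = sums_of_squares(silly)

print(ssb_good, ssw_good)    # 600 6
print(ssb_silly, ssw_silly)  # 0 606
```

The total variation is 606 in both cases. Only its division between "between" and "within" changes with the grouping.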

How do you interpret the F value in ANOVA?

The F ratio is the ratio of two mean square values. If the null hypothesis is true, you expect F to have a value close to 1.0 most of the time. A large F ratio means that the variation among group means is more than you’d expect to see by chance.
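One way to see what "more than you’d expect to see by chance" means is a permutation check: shuffle the observations across groups many times, so that any real group effect is destroyed, and count how often the shuffled F ratio is at least as large as the observed one. This swaps in a permutation approach in place of the usual F-distribution lookup. The function names, the data, the number of shuffles and the seed are all assumptions made for the sketch.

```python
import random
from statistics import mean

def f_ratio(groups):
    """One-way ANOVA F ratio: mean square between / mean square within."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)

def permutation_p_value(groups, n_shuffles=2000, seed=0):
    """Share of random regroupings whose F ratio is >= the observed F ratio."""
    rng = random.Random(seed)
    observed = f_ratio(groups)
    pooled = [x for g in groups for x in g]
    sizes = [len(g) for g in groups]
    hits = 0
    for _ in range(n_shuffles):
        rng.shuffle(pooled)
        # Cut the shuffled values back into groups of the original sizes
        shuffled, start = [], 0
        for size in sizes:
            shuffled.append(pooled[start:start + size])
            start += size
        if f_ratio(shuffled) >= observed:
            hits += 1
    return observed, hits / n_shuffles

# Made-up data with clearly separated group means
groups = [[4, 5, 6, 5], [6, 7, 8, 7], [9, 10, 9, 11]]
observed_f, p_value = permutation_p_value(groups)
print(observed_f, p_value)
```

When the groups do not really differ, the shuffled F ratios cluster around 1 and the observed value is unremarkable. Here the observed F is far larger than almost every shuffled value, so the estimated p-value is small.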

What is the F critical value?

Critical F: The value of the F-statistic at the threshold probability α of mistakenly rejecting a true null hypothesis (the Type I error rate). If the observed F is larger than the critical F, the null hypothesis is rejected at level α.
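Written as a decision rule for a one-way ANOVA, and assuming the notation $k$ for the number of groups and $N$ for the total number of observations (symbols introduced here, not taken from the text above), this reads:

$$
\text{reject } H_0 \quad \text{if} \quad F_{\text{observed}} > F_{\alpha;\, k-1,\, N-k}
$$

where $F_{\alpha;\, k-1,\, N-k}$ is the upper-$\alpha$ quantile of the F distribution with $k-1$ and $N-k$ degrees of freedom, the value usually read from an F table.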

Which is better ANOVA or t-test?

Neither test is better in general; the choice depends on the number of groups. When the population means of only two groups are to be compared, the t-test is used, but when the means of more than two groups are to be compared, ANOVA is preferred.

What is the difference between t-test and F test?

The difference between the t-test and the F-test is that the t-test is used to test whether a sample mean is significantly different from a given mean, or from the mean of another sample. An F-test, on the other hand, is used to compare the variances of two samples and check whether their variability differs.

How do you know if the F value is significant?

If you get a large F value (one that is bigger than the F critical value found in a table), it means the result is significant: you reject the null hypothesis that all group means are equal. Equivalently, the p-value is smaller than α. A small p-value does not mean that every individual comparison is significant, because the F statistic only tests the joint effect of all the variables together.

How is variance calculated in ANOVA?

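In an ANOVA, data are organized by comparison or treatment groups. The between-group sum of squares is $SSB = \sum_j n_j (\bar{X}_j - \bar{X})^2$ and is computed by summing the squared differences between each treatment (or group) mean and the overall mean, weighted by the group size $n_j$. The total sum of squares is $SST = \sum_j \sum_i (X_{ij} - \bar{X})^2$ and is computed by summing the squared differences between each observation and the overall sample mean.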
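The two sums of squares described above are linked by a third: the within-group sum of squares (each observation against its own group mean) is what remains of the total after the between-group part is removed. The sketch below checks this on made-up data; the variable names are illustrative assumptions.

```python
from math import isclose
from statistics import mean

# Made-up data organized by treatment group
groups = [[3, 5, 7], [6, 8, 10], [9, 11, 13]]

all_values = [x for g in groups for x in g]
overall_mean = mean(all_values)

# Between: each group mean against the overall mean, weighted by group size
ss_between = sum(len(g) * (mean(g) - overall_mean) ** 2 for g in groups)

# Total: each observation against the overall mean
ss_total = sum((x - overall_mean) ** 2 for x in all_values)

# Within: each observation against its own group mean
ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

print(ss_between, ss_within, ss_total)  # 54 24 78
print(isclose(ss_total, ss_between + ss_within))  # True
```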